2025

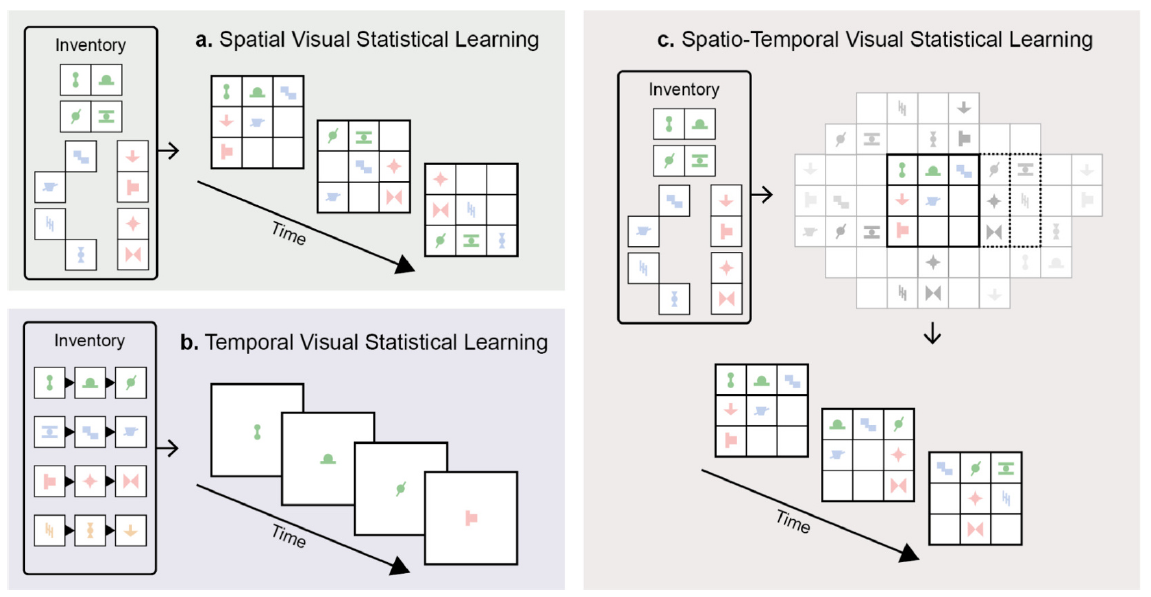

Visual Statistical Learning (VSL) is classically investigated in a restricted format, either as temporal or spatial VSL, and void of any effect or bias due to context. However, in real-world environments, spatial patterns unfold over time, leading to a fundamental intertwining between spatial and temporal regularities. In addition, their interpretation is heavily influenced by contextual information through internal biases encoded at different scales. Using a novel spatio-temporal VSL setup, we explored this interdependence between time, space, and biases by moving spatially defined patterns in and out of participants’ views over time in the presence or absence of occluders.

First, we replicated the classical VSL results in such a mixed setup. Next, we obtained evidence that purely temporal statistics can be used for learning spatial patterns through internal inference. Finally, we found that motion-defined and occlusion-related context jointly and strongly modulated which temporal and spatial regularities were automatically learned from the same visual input. Overall, our findings expand the conceptualization of VSL from a mechanistic recorder of low-level spatial and temporal co-occurrence statistics of single visual elements to a complex interpretive process that integrates low-level spatio-temporal information with higher-level internal biases to infer the general underlying structure of the environment.

2024

Transfer learning, the re-application of previously learned higher-level regularities to novel input, is a key challenge in cognition. While previous empirical studies investigated human transfer learning in supervised or reinforcement learning for explicit knowledge, it is unknown whether such transfer occurs during naturally more common implicit and unsupervised learning and if so, how it is related to memory consolidation.

We compared the transfer of newly acquired explicit and implicit abstract knowledge during unsupervised learning by extending a visual statistical learning paradigm to a transfer learning context. We found transfer during unsupervised learning but with important differences depending on the explicitness/implicitness of the acquired knowledge. Observers acquiring explicit knowledge during initial learning could transfer the learned structures immediately. In contrast, observers with the same amount but implicit knowledge showed the opposite effect, a structural interference during transfer. However, with sleep between the learning phases, implicit observers switched their behaviour and showed the same pattern of transfer as explicit observers did while still remaining implicit. This effect was specific to sleep and not found after non-sleep consolidation. Our results highlight similarities and differences between explicit and implicit learning while acquiring generalizable higher-level knowledge and relying on consolidation for restructuring internal representations.

Current studies investigating electroencephalogram correlates associated with categorization of sensory stimuli (P300 event-related potential, alpha event-related desynchronization, theta event-related synchronization) typically use an oddball paradigm with few, familiar, highly distinct stimuli, providing limited insight into the aspects of categorization (e.g., difficulty, membership, uncertainty) that the correlates are linked to. Using a more complex task, we investigated whether such more specific links could be established between correlates and learning and how these links change during the emergence of new categories. In our study, participants learned to categorize novel stimuli varying continuously on multiple integral feature dimensions, while electroencephalogram was recorded from the beginning of the learning process. While there was no significant P300 event-related potential modulation, both alpha event-related desynchronization and theta event-related synchronization followed a characteristic trajectory in proportion with the gradual acquisition of the two categories. Moreover, the two correlates were modulated by different aspects of categorization: alpha event-related desynchronization by the difficulty of the task, and the magnitude of theta event-related synchronization by the identity and possibly the strength of category membership. Thus, neural signals commonly related to categorization are appropriate for tracking both the dynamic emergence of internal representation of categories, and different meaningful aspects of the categorization process.

What is the link between eye movements and sensory learning? Although some theories have argued for an automatic interaction between what we know and where we look that continuously modulates human information gathering behavior during both implicit and explicit learning, there exists limited experimental evidence supporting such an ongoing interplay.

To address this issue, we used a visual statistical learning paradigm combined with a gaze contingent stimulus presentation and manipulated the explicitness of the task to explore how learning and eye movements interact. During both implicit exploration and explicit visual learning of unknown composite visual scenes, spatial eye movement patterns systematically and gradually changed in accordance with the underlying statistical structure of the scenes. Moreover, the degree of change was directly correlated with the amount and type of knowledge the observers acquired. Our results provide the first evidence for an ongoing and specific bidirectional interaction between hitherto accumulated knowledge and eye movements during both implicit and explicit visual statistical learning, in line with theoretical accounts of active learning.

2023

2022

Statistical learning, the ability of the human brain to uncover patterns organized according to probabilistic relationships between elements and events of the environment, is a powerful learning mechanism underlying many cognitive processes. Here we examined how memory for statistical learning of probabilistic spatial configurations is impacted by interference at the time of initial exposure and varying degrees of wakefulness and sleep during subsequent offline processing. We manipulated levels of interference at learning by varying the time between exposures of different spatial configurations.

During the subsequent offline period, participants either remained awake (active wake or quiet wake) or took a nap comprised of either non-rapid eye movement (NREM) sleep only or NREM and rapid eye movement (REM) sleep. Recognition of the trained spatial configurations, as well as a novel configuration exposed after the offline period, was tested approximately 6–7 h after initial exposure. We found that the sleep conditions did not provide any additional memory benefit compared to wakefulness for spatial statistical learning with low interference. For high interference, we found some evidence that memory may be impaired following quiet wake and NREM sleep only, but not active wake or combined NREM and REM sleep. These results indicate that learning conditions may interact with offline brain states to influence the long-term retention of spatial statistical learning.

Vision and learning have long been considered to be two areas of research linked only distantly. However, recent developments in vision research have changed the conceptual definition of vision from a signal-evaluating process to a goal-oriented interpreting process, and this shift binds learning, together with the resulting internal representations, intimately to vision. In this review, we consider various types of learning (perceptual, statistical, and rule/abstract) associated with vision in the past decades and argue that they represent differently specialized versions of the fundamental learning process, which must be captured in its entirety when applied to complex visual processes.

We show why the generalized version of statistical learning can provide the appropriate setup for such a unified treatment of learning in vision, what computational framework best accommodates this kind of statistical learning, and what plausible neural scheme could feasibly implement this framework. Finally, we list the challenges that the field of statistical learning faces in fulfilling the promise of being the right vehicle for advancing our understanding of vision in its entirety.

Sequential activity reflecting previously experienced temporal sequences is considered a hallmark of learning across cortical areas. However, it is unknown how cortical circuits avoid the converse problem: producing spurious sequences that are not reflecting sequences in their inputs. We develop methods to quantify and study sequentiality in neural responses. We show that recurrent circuit responses generally include spurious sequences, which are specifically prevented in circuits that obey two widely known features of cortical microcircuit organization: Dale’s law and Hebbian connectivity. In particular, spike-timing-dependent plasticity in excitation-inhibition networks leads to an adaptive erasure of spurious sequences.

We tested our theory in multielectrode recordings from the visual cortex of awake ferrets. Although responses to natural stimuli were largely non-sequential, responses to artificial stimuli initially included spurious sequences, which diminished over extended exposure. These results reveal an unexpected role for Hebbian experience-dependent plasticity and Dale’s law in sensory cortical circuits.

2021

Perception is often described as probabilistic inference requiring an internal representation of uncertainty. However, it is unknown whether uncertainty is represented in a task-dependent manner, solely at the level of decisions, or in a fully Bayesian manner, across the entire perceptual pathway.

To address this question, we first codify and evaluate the possible strategies the brain might use to represent uncertainty, and highlight the normative advantages of fully Bayesian representations. In such representations, uncertainty information is explicitly represented at all stages of processing, including early sensory areas, allowing for flexible and efficient computations in a wide variety of situations. Next, we critically review neural and behavioral evidence about the representation of uncertainty in the brain agreeing with fully Bayesian representations. We argue that sufficient behavioral evidence for fully Bayesian representations is lacking and suggest experimental approaches for demonstrating the existence of multivariate posterior distributions along the perceptual pathway.

Uncertainty—How It Makes Science Advance

K Kampourakis & K McCain (2020). Uncertainty—How It Makes Science Advance. Oxford, UK: Oxford University Press. 264 pp. £19.99 (hardback), ISBN 978–0–19–087166–6.

Reviewed by:

József Fiser, Department of Cognitive Science, Central European University, Vienna, Austria

Although objects are the fundamental units of our representation interpreting the environment around us, it is still not clear how we handle and organize the incoming sensory information to form object representations. By utilizing previously well-documented advantages of within-object over across-object information processing, here we test whether learning involuntarily consistent visual statistical properties of stimuli that are free of any traditional segmentation cues might be sufficient to create object-like behavioral effects.

Using a visual statistical learning paradigm and measuring efficiency of 3-AFC search and object-based attention, we find that statistically defined and implicitly learned visual chunks bias observers’ behavior in subsequent search tasks the same way as objects defined by visual boundaries do. These results suggest that learning consistent statistical contingencies based on the sensory input contributes to the emergence of object representations.

2020

The ability of developing complex internal representations of the environment is considered a crucial antecedent to the emergence of humans’ higher cognitive functions. Yet it is an open question whether there is any fundamental difference in how humans and other good visual learner species naturally encode aspects of novel visual scenes. Using the same modified visual statistical learning paradigm and multielement stimuli, we investigated how human adults and honey bees (Apis mellifera) encode spontaneously, without dedicated training, various statistical properties of novel visual scenes.

We found that, similarly to humans, honey bees automatically develop a complex internal representation of their visual environment that evolves with accumulation of new evidence even without a targeted reinforcement. In particular, with more experience, they shift from being sensitive to statistics of only elemental features of the scenes to relying on co-occurrence frequencies of elements while losing their sensitivity to elemental frequencies, but they never encode automatically the predictivity of elements. In contrast, humans involuntarily develop an internal representation that includes single-element and co-occurrence statistics, as well as information about the predictivity between elements. Importantly, capturing human visual learning results requires a probabilistic chunk-learning model, whereas a simple fragment-based memory-trace model that counts occurrence summary statistics is sufficient to replicate honey bees’ learning behavior. Thus, humans’ sophisticated encoding of sensory stimuli that provides intrinsic sensitivity to predictive information might be one of the fundamental prerequisites of developing higher cognitive abilities.
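The three kinds of statistics contrasted in this abstract (single-element frequencies, co-occurrence frequencies, and predictivity between elements) can be illustrated with a minimal sketch. It is not the model used in the study: the function name `scene_statistics` and the toy scenes are hypothetical, scenes are treated as unordered sets so spatial arrangement is ignored, and predictivity is assumed here to mean the conditional probability that one element is present given another.

```python
from collections import Counter
from itertools import combinations


def scene_statistics(scenes):
    """Return element frequencies, pair co-occurrence frequencies, and predictivity.

    `scenes` is a list of sets of element labels (a hypothetical toy format).
    """
    n_scenes = len(scenes)
    element_counts = Counter()
    pair_counts = Counter()
    for scene in scenes:
        items = sorted(set(scene))
        element_counts.update(items)
        # Count every unordered pair of elements that appears in the same scene.
        pair_counts.update(combinations(items, 2))

    element_freq = {e: c / n_scenes for e, c in element_counts.items()}
    pair_freq = {p: c / n_scenes for p, c in pair_counts.items()}

    # Predictivity (assumed definition): P(second present | first present).
    predictivity = {}
    for (a, b), c in pair_counts.items():
        predictivity[(a, b)] = c / element_counts[a]
        predictivity[(b, a)] = c / element_counts[b]
    return element_freq, pair_freq, predictivity


# Toy scenes, invented purely for illustration.
scenes = [
    {"A", "B", "C"},
    {"A", "B", "D"},
    {"A", "C", "D"},
    {"A", "B"},
]
element_freq, pair_freq, predictivity = scene_statistics(scenes)
print(element_freq["A"], pair_freq[("A", "B")])
print(predictivity[("A", "B")], predictivity[("B", "A")])
```

In this toy set the pair A and B has a single co-occurrence frequency, yet B predicts A perfectly while A predicts B only some of the time. That asymmetry is the kind of information a learner sensitive only to co-occurrence counts would miss.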

What is the link between eye movements and sensory learning? Although some theories have argued for a permanent and automatic interaction between what we know and where we look, which continuously modulates human information-gathering behavior during both implicit and explicit learning, there exists surprisingly little evidence supporting such an ongoing interaction.

We used a pure form of implicit learning called visual statistical learning and manipulated the explicitness of the task to explore how learning and eye movements interact. During both implicit exploration and explicit visual learning of unknown composite visual scenes, eye movement patterns systematically changed in accordance with the underlying statistical structure of the scenes. Moreover, the degree of change was directly correlated with the amount of knowledge the observers acquired. Our results provide the first evidence for an ongoing and specific interaction between hitherto accumulated knowledge and eye movements during both implicit and explicit learning.

2019

Orientation and position of small image segments are considered to be two fundamental low-level attributes in early visual processing, yet their encoding in complex natural stimuli is underexplored. By measuring the just-noticeable differences in noise perturbation, we investigated how orientation and position information of a large number of local elements (Gabors) were encoded separately or jointly.  Importantly, the Gabors composed various classes of naturalistic stimuli that were equated by all low-level attributes and differed only in their higher-order configural complexity and familiarity. Although unable to consciously tell apart the type of perturbation, observers detected orientation and position noise significantly differently. Furthermore, when the Gabors were perturbed by both types of noise simultaneously, performance adhered to a reliability-based optimal probabilistic combination of individual attribute noises. Our results suggest that orientation and position are independently coded and probabilistically combined for naturalistic stimuli at the earliest stage of visual processing.

We investigated the origin of two previously reported general rules of perceptual learning. First, the initial discrimination thresholds and the amount of learning were found to be related through a Weber-like law. Second, increased training length negatively influenced the observer’s ability to generalize the obtained knowledge to a new context. Using a five-day training protocol, separate groups of observers were trained to perform discrimination around two different reference values of either contrast (73% and 30%) or orientation (25° and 0°). In line with previous research, we found a Weber-like law between initial performance and the amount of learning, regardless of whether the tested attribute was contrast or orientation.

However, we also showed that this relationship directly reflected observers’ perceptual scaling function relating physical intensities to perceptual magnitudes, suggesting that participants learned equally on their internal perceptual space in all conditions. In addition, we found that with the typical five-day training period, the extent of generalization was proportional to the amount of learning, seemingly contradicting the previously reported diminishing generalization with practice. This result suggests that the negative link between generalization and the length of training found in earlier studies might have been due to overfitting after longer training and not directly due to the amount of learning per se.

The concept of objects is fundamental to cognition and is defined by a consistent set of sensory properties and physical affordances. Although it is unknown how the abstract concept of an object emerges, most accounts assume that visual or haptic boundaries are crucial in this process. Here, we tested an alternative hypothesis that boundaries are not essential but simply reflect a more fundamental principle: consistent visual or haptic statistical properties.

Using a novel visuo-haptic statistical learning paradigm, we familiarised participants with objects defined solely by across-scene statistics provided either visually or through physical interactions. We then tested them on both a visual familiarity and a haptic pulling task, thus measuring both within-modality learning and across-modality generalisation. Participants showed strong within-modality learning and ‘zero-shot’ across-modality generalisation which were highly correlated. Our results demonstrate that humans can segment scenes into objects, without any explicit boundary cues, using purely statistical information.

System-level learning of sensory information is traditionally divided into two domains: perceptual learning that focuses on acquiring knowledge suitable for fine discrimination between similar sensory inputs, and statistical learning that explores the mechanisms that develop complex representations of unfamiliar sensory experiences. The two domains have been typically treated in complete separation both in terms of the underlying computational mechanisms and the brain areas and processes implementing those computations.

However, a number of recent findings in both domains call into question this strict separation. We interpret classical and more recent results in the general framework of probabilistic computation, provide a unifying view of how various aspects of the two domains are interlinked, and suggest how the probabilistic approach can also alleviate the problem of dealing with widely different types of neural correlates of learning. Finally, we outline several directions along which our proposed approach fosters new types of experiments that can promote investigations of natural learning in humans and other species.

2018

Many sensory neural circuits exhibit response normalization, which occurs when the response of a neuron to a combination of multiple stimuli is less than the sum of the responses to the individual stimuli presented alone. In the visual cortex, normalization takes the forms of cross-orientation suppression and surround suppression. At the onset of visual experience, visual circuits are partially developed and exhibit some mature features such as orientation selectivity, but it is unknown whether cross-orientation suppression is present at the onset of visual experience or requires visual experience for its emergence.

We characterized the development of normalization and its dependence on visual experience in female ferrets. Visual experience was varied across three conditions: typical rearing, dark rearing, and dark rearing with daily exposure to simple sinusoidal gratings (14-16 hours total). Cross-orientation suppression and surround suppression were noted in the earliest observations, and did not vary considerably with experience. We also observed evidence of continued maturation of receptive field properties in the second month of visual experience: substantial length summation was observed only in the oldest animals (postnatal day 90); evoked firing rates were greatly increased in older animals; and direction selectivity required experience, but declined slightly in older animals. These results constrain the space of possible circuit implementations of these features.
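The definition of response normalization given in this abstract (the response to combined stimuli is less than the sum of the responses to each stimulus alone) can be illustrated with a minimal sketch of the standard divisive normalization equation. This is not the analysis from the study: the function name `normalized_response` and all parameter values (`sigma`, `n`, `r_max`, the contrasts and the weights) are assumptions chosen only for illustration.

```python
def normalized_response(contrasts, drive_weights, sigma=0.1, n=2.0, r_max=1.0):
    """Toy divisive normalization.

    The excitatory drive (weighted stimulus contrasts) is divided by a
    semi-saturation constant plus the pooled contrast of all stimuli.
    All parameters are illustrative assumptions.
    """
    drive = sum(w * c ** n for w, c in zip(drive_weights, contrasts))
    pool = sum(c ** n for c in contrasts)
    return r_max * drive / (sigma ** n + pool)


# Hypothetical neuron driven by the preferred grating only (weight 1.0),
# not by the orthogonal mask grating (weight 0.0).
weights = (1.0, 0.0)
preferred_alone = normalized_response((0.5, 0.0), weights)
mask_alone = normalized_response((0.0, 0.5), weights)
plaid = normalized_response((0.5, 0.5), weights)

print(preferred_alone, mask_alone, plaid)
```

In this sketch the mask drives no response on its own but still enters the normalization pool, so the response to the plaid falls below the response to the preferred grating alone. This is the signature of cross-orientation suppression.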

Effective communication crucially depends on the ability to produce and recognize structured signals, as apparent in language and birdsong. Although it is not clear to what extent similar syntactic-like abilities can be identified in other animals, recently we reported that domestic chicks can learn abstract visual patterns and the statistical structure defined by a temporal sequence of visual shapes. However, little is known about chicks’ ability to process spatial/positional information from visual configurations.

Here, we used filial imprinting as an unsupervised learning mechanism to study spontaneous encoding of the structure of a configuration of different shapes. After being exposed to a triplet of shapes (ABC or CAB), chicks could discriminate those triplets from a permutation of the same shapes in different order (CAB or ABC), revealing a sensitivity to the spatial arrangement of the elements. When tested with a fragment taken from the imprinting triplet that followed the familiar adjacency-relationships (AB or BC) vs. one in which the shapes maintained their position with respect to the stimulus edges (AC), chicks revealed a preference for the configuration with familiar edge elements, showing an edge bias previously found only with temporal sequences.

In principle, the development of sensory receptive fields in cortex could arise from experience-independent mechanisms that have been acquired through evolution, or through an online analysis of the sensory experience of the individual animal.

Here we review recent experiments that suggest that the development of direction selectivity in carnivore visual cortex requires experience, but also suggest that the experience of an individual animal cannot greatly influence the parameters of the direction tuning that emerges, including direction angle preference and speed tuning. The direction angle preference that a neuron will acquire can be predicted from small initial biases that are present in the naïve cortex prior to the onset of visual experience. Further, experience with stimuli that move at slow or fast speeds does not alter the speed tuning properties of direction-selective neurons, suggesting that speed tuning preferences are built in. Finally, unpatterned optogenetic activation of the cortex over a period of a few hours is sufficient to produce the rapid emergence of direction selectivity in the naïve ferret cortex, suggesting that information about the direction angle preference that cells will acquire must already be present in the cortical circuit prior to experience. These results are consistent with the idea that experience has a permissive influence on the development of direction selectivity.

2017

Behavioral evidence has shown that humans automatically develop internal representations adapted to the temporal and spatial statistics of the environment. Building on prior fMRI studies that have focused on statistical learning of temporal sequences, we investigated the neural substrates and mechanisms underlying statistical learning from scenes with a structured spatial layout. Our goals were twofold: (1) to determine discrete brain regions in which degree of learning (i.e., behavioral performance) was a significant predictor of neural activity during acquisition of spatial regularities and (2) to examine how connectivity between this set of areas and the rest of the brain changed over the course of learning. Univariate activity analyses indicated a diffuse set of dorsal striatal and occipitoparietal activations correlated with individual differences in participants’ ability to acquire the underlying spatial structure of the scenes. In addition, bilateral medial-temporal activation was linked to participants’ behavioral performance, suggesting that spatial statistical learning recruits additional resources from the limbic system. Connectivity analyses examined, across the time course of learning, psychophysiological interactions with peak regions defined by the initial univariate analysis. Generally, we find that task-based connectivity with these regions was significantly greater in early relative to later periods of learning. Moreover, in certain cases, decreased task-based connectivity between time points was predicted by overall posttest performance. Results suggest a narrowing mechanism whereby the brain, confronted with a novel structured environment, initially boosts overall functional integration and then reduces interregional coupling over time.

2016

Many circuits in the mammalian brain are organized in a topographic or columnar manner. These circuits could be activated, in ways that reveal circuit function or restore function after disease, by an artificial stimulation system that is capable of independently driving local groups of neurons. Here we present a simple custom microscope called ProjectorScope 1 that incorporates off-the-shelf parts and a liquid crystal display (LCD) projector to stimulate surface brain regions that express channelrhodopsin-2 (ChR2).

In principle, local optogenetic stimulation of the brain surface with optical projection systems might not produce local activation of a highly interconnected network like the cortex, because of potential stimulation of axons of passage or extended dendritic trees. However, here we demonstrate that the combination of virally mediated ChR2 expression levels and the light intensity of ProjectorScope 1 is capable of producing local spatial activation with a resolution of ∼200-300 μm. We use the system to examine the role of cortical activity in the experience-dependent emergence of motion selectivity in immature ferret visual cortex. We find that optogenetic cortical activation alone, without visual stimulation, is sufficient to produce increases in motion selectivity, suggesting the presence of a sharpening mechanism that does not require precise spatiotemporal activation of the visual system. These results demonstrate that optogenetic stimulation can sculpt the developing brain.

Neural responses in the visual cortex are variable, and there is now an abundance of data characterizing how the magnitude and structure of this variability depend on the stimulus. Current theories of cortical computation fail to account for these data; they either ignore variability altogether or only model its unstructured Poisson-like aspects.

We develop a theory in which the cortex performs probabilistic inference such that population activity patterns represent statistical samples from the inferred probability distribution. Our main prediction is that perceptual uncertainty is directly encoded by the variability, rather than the average, of cortical responses. Through direct comparisons to previously published data as well as original data analyses, we show that a sampling-based probabilistic representation accounts for the structure of noise, signal, and spontaneous response variability and correlations in the primary visual cortex. These results suggest a novel role for neural variability in cortical dynamics and computations.
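The central idea of this abstract, that responses behave like samples from an inferred distribution so that uncertainty shows up in response variability rather than in the mean, can be illustrated with a minimal one-dimensional sketch. It is a toy Gaussian example and not the model developed in the paper: the function names, the prior, the noise levels, and the number of samples are all assumptions made for illustration.

```python
import random
import statistics


def posterior_params(observation, obs_sd, prior_mean=0.0, prior_sd=1.0):
    """Mean and standard deviation of a Gaussian posterior (conjugate update)."""
    prior_precision = 1.0 / prior_sd ** 2
    obs_precision = 1.0 / obs_sd ** 2
    post_var = 1.0 / (prior_precision + obs_precision)
    post_mean = post_var * (prior_precision * prior_mean + obs_precision * observation)
    return post_mean, post_var ** 0.5


def sample_responses(observation, obs_sd, n_samples=2000, seed=0):
    """Treat each simulated 'response' as one sample from the posterior."""
    rng = random.Random(seed)
    mean, sd = posterior_params(observation, obs_sd)
    return [rng.gauss(mean, sd) for _ in range(n_samples)]


# Same observation, but with a reliable versus an unreliable sensory signal
# (both noise levels are illustrative assumptions).
reliable = sample_responses(1.0, obs_sd=0.2)
unreliable = sample_responses(1.0, obs_sd=2.0)
var_reliable = statistics.variance(reliable)
var_unreliable = statistics.variance(unreliable)

print(var_reliable, var_unreliable)
```

Under these assumptions, the less reliable input yields a broader posterior and therefore more variable simulated responses, which is the qualitative link between uncertainty and variability that the abstract describes.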

We address two main challenges facing systems neuroscience today: understanding the nature and function of cortical feedback between sensory areas and of correlated variability. Starting from the old idea of perception as probabilistic inference, we show how to use knowledge of the physical task to make testable predictions for the influence of feedback signals on early sensory representations.

Applying our framework to a two-alternative forced choice task paradigm, we can explain multiple empirical findings that have been hard to account for by the traditional feedforward model of sensory processing, including the task dependence of neural response correlations and the diverging time courses of choice probabilities and psychophysical kernels. Our model makes new predictions and characterizes a component of correlated variability that represents task-related information rather than performance-degrading noise. It demonstrates a normative way to integrate sensory and cognitive components into physiologically testable models of perceptual decision-making.

2015

Objective: Individuals with autism spectrum disorder (ASD) are often characterized as having social engagement and language deficiencies, but a sparing of visuospatial processing and short-term memory (STM), with some evidence of supranormal levels of performance in these domains. The present study expanded on this evidence by investigating the observational learning of visuospatial concepts from patterns of covariation across multiple exemplars. Method: Child and adult participants with ASD, and age-matched control participants, viewed multishape arrays composed from a random combination of pairs of shapes that were each positioned in a fixed spatial arrangement.

Results: After this passive exposure phase, a posttest revealed that all participant groups could discriminate pairs of shapes with high covariation from randomly paired shapes with low covariation. Moreover, learning of these high-covariation shape pairs was better in adults with ASD than in age-matched controls, whereas performance in children with ASD did not differ from that of controls. Conclusions: These results extend previous observations of visuospatial enhancement in ASD into the domain of learning, and suggest that enhanced visual statistical learning may have arisen from a sustained bias to attend to local details in complex arrays of visual features.

Although orientation coding in the human visual system has been researched with simple stimuli, little is known about how orientation information is represented while viewing complex images. We show that, similar to findings with simple Gabor textures, the visual system involuntarily discounts orientation noise in a wide range of natural images, and that this discounting produces a dipper function in the sensitivity to orientation noise, with best sensitivity at intermediate levels of pedestal noise.

However, the level of this discounting depends on the complexity and familiarity of the input image, resulting in an image-class-specific threshold that changes the shape and position of the dipper function according to image class. These findings do not fit a filter-based feed-forward view of orientation coding, but can be explained by a process that utilizes an experience-based perceptual prior of the expected local orientations and their noise. Thus, the visual system encodes orientation in a dynamic context by continuously combining sensory information with expectations derived from earlier experiences.

2013

It has been reported recently that while general sequence learning across ages conforms to the typical inverted-U shape pattern, with best performance in early adulthood, surprisingly, the basic ability to implicitly pick up triplets that occur with high vs. low probability in the sequence is best before 12 years of age and weakens significantly afterwards. Based on these findings, it has been hypothesized that the cognitively controlled processes coming online at around 12 are useful for more targeted explicit learning at the cost of becoming relatively less sensitive to raw probabilities of events.

To test this hypothesis, we collected data in a sequence learning task using probabilistic sequences in five age groups from 11 to 39 years of age (N = 288), replicating the original implicit learning paradigm in an explicit task setting where subjects were guided to find repeating sequences. We found that in contrast to the implicit results, performance with the high- vs. low-probability triplets was at the same level in all age groups when subjects sought patterns in the sequence explicitly. Importantly, measurements of explicit knowledge about the identity of the sequences revealed a significant increase in the ability to explicitly access the true sequences exactly around the age where the earlier study found the significant drop in the ability to implicitly learn raw probabilities. These findings support the conjecture that the gradually increasing involvement of more complex internal models optimizes our skill learning abilities by compensating for the performance loss due to down-weighting the raw probabilities of the sensory input, while expanding our ability to acquire more sophisticated skills.

A comment on ‘Population rate dynamics and multineuron firing patterns in sensory cortex’ by Okun et al., Journal of Neuroscience 32(48):17108-17119, 2012, and our response to the corresponding reply by Okun et al. (arXiv, 2013).

2012

Implicit skill learning underlies obtaining not only motor, but also cognitive and social skills through the life of an individual. Yet, the ontogenetic changes in humans’ implicit learning abilities have not yet been characterized, and, thus, their role in acquiring new knowledge efficiently during development is unknown.

We investigated such learning across the lifespan, between 4 and 85 years of age with an implicit probabilistic sequence learning task, and we found that the difference in implicitly learning high- vs. low-probability events – measured by raw reaction time (RT) – exhibited a rapid decrement around the age of 12. Accuracy and z-transformed data showed partially different developmental curves, suggesting a re-evaluation of analysis methods in developmental research. The decrement in raw RT differences supports an extension of the traditional two-stage lifespan skill acquisition model: in addition to a decline above the age of 60 reported in earlier studies, sensitivity to raw probabilities and, therefore, acquiring new skills is significantly more effective until early adolescence than later in life. These results suggest that due to developmental changes in early adolescence, implicit skill learning processes undergo a marked shift in weighting raw probabilities vs. more complex interpretations of events, which, with appropriate timing, prove to be an optimal strategy for human skill learning.

In performing search tasks, the visual system encodes information across the visual field at a resolution inversely related to eccentricity and deploys saccades to place visually interesting targets upon the fovea, where resolution is highest. The serial process of fixation, punctuated by saccadic eye movements, continues until the desired target has been located. Loss of central vision restricts the ability to resolve the high spatial information of a target, interfering with this visual search process.

We investigate oculomotor adaptations to central visual field loss with gaze-contingent artificial scotomas. Methods. Spatial distortions were placed at random locations in 25° square natural scenes. Gaze-contingent artificial central scotomas were updated at the screen rate (75 Hz) based on a 250 Hz eye tracker. Eight subjects searched the natural scene for the spatial distortion and indicated its location using a mouse-controlled cursor. Results. As the central scotoma size increased, the mean search time increased [F(3,28) = 5.27, p = 0.05], and the spatial distribution of gaze points during fixation increased significantly along the x [F(3,28) = 6.33, p = 0.002] and y [F(3,28) = 3.32, p = 0.034] axes. Oculomotor patterns of fixation duration, saccade size, and saccade duration did not change significantly, regardless of scotoma size. In conclusion, there is limited automatic adaptation of the oculomotor system after simulated central vision loss.

Internally generated, spontaneous activity is ubiquitous in the cortex, yet it does not appear to have a significant negative impact on sensory processing. Various studies have found that stimulus onset reduces the variability of cortical responses, but the characteristics of this suppression remained unexplored. By recording multiunit activity from awake and anesthetized rats, we investigated whether and how this noise suppression depends on properties of the stimulus and on the state of the cortex.

In agreement with theoretical predictions, we found that the degree of noise suppression in awake rats has a nonmonotonic dependence on the temporal frequency of a flickering visual stimulus with an optimal frequency for noise suppression ~2 Hz. This effect cannot be explained by features of the power spectrum of the spontaneous neural activity. The nonmonotonic frequency dependence of the suppression of variability gradually disappears under increasing levels of anesthesia and shifts to a monotonic pattern of increasing suppression with decreasing frequency. Signal-to-noise ratios show a similar, although inverted, dependence on cortical state and frequency. These results suggest the existence of an active noise suppression mechanism in the awake cortical system that is tuned to support signal propagation and coding.

2011

The brain maintains internal models of its environment to interpret sensory inputs and to prepare actions. Although behavioral studies have demonstrated that these internal models are optimally adapted to the statistics of the environment, the neural underpinning of this adaptation is unknown.

Using a Bayesian model of sensory cortical processing, we related stimulus-evoked and spontaneous neural activities to inferences and prior expectations in an internal model and predicted that they should match if the model is statistically optimal. To test this prediction, we analyzed visual cortical activity of awake ferrets during development. Similarity between spontaneous and evoked activities increased with age and was specific to responses evoked by natural scenes. This demonstrates the progressive adaptation of internal models to the statistics of natural stimuli at the neural level.

Several studies report a right hemisphere (RH) advantage for visuo-spatial integration and a left hemisphere (LH) advantage for inferring conceptual knowledge from patterns of covariation. The present study examined hemispheric asymmetry in the implicit learning of new visual-feature combinations. A split-brain patient and normal control participants viewed multi-shape scenes presented in either the right or left visual fields. Unbeknownst to the participants the scenes were composed from a random combination of fixed pairs of shapes. Subsequent testing found that control participants could discriminate fixed-pair shapes from randomly combined shapes when presented in either visual field. The split-brain patient performed at chance except when both the practice and test displays were presented in the left visual field (RH). These results suggest that the statistical learning of new visual features is dominated by visuospatial processing in the right hemisphere and provide a prediction about how fMRI activation patterns might change during unsupervised statistical learning.

2010

Human perception has recently been characterized as statistical inference based on noisy and ambiguous sensory inputs. Moreover, suitable neural representations of uncertainty have been identified that could underlie such probabilistic computations. In this review, we argue that learning an internal model of the sensory environment is another key aspect of the same statistical inference procedure and thus perception and learning need to be treated jointly.

We review evidence for statistically optimal learning in humans and animals, and re-evaluate possible neural representations of uncertainty based on their potential to support statistically optimal learning. We propose that spontaneous activity can have a functional role in such representations leading to a new, sampling-based, framework of how the cortex represents information and uncertainty.

2009

Traditionally, perceptual learning in humans and classical conditioning in animals have been considered as two very different research areas, with separate problems, paradigms, and explanations. However, a number of themes common to these fields of research emerge when they are approached from the more general concept of representational learning. To demonstrate this, I present results of several learning experiments with human adults and infants, exploring how internal representations of complex unknown visual patterns might emerge in the brain.

I provide evidence that this learning cannot be captured fully by any simple pairwise associative learning scheme, but rather by a probabilistic inference process called Bayesian model averaging, in which the brain is assumed to formulate the most likely chunking/grouping of its previous experience into independent representational units. Such a generative model attempts to represent the entire world of stimuli with optimal ability to generalize to likely scenes in the future. I review the evidence showing that a similar philosophy and generative scheme of representation has successfully described a wide range of experimental data in the domain of classical conditioning in animals. These convergent findings suggest that statistical theories of representational learning might help to link human perceptual learning and animal classical conditioning results into a coherent framework.

In the present review we discuss an extension of classical perceptual learning called the observational learning paradigm. We propose that studying the process by which humans develop internal representations of their environment requires modifications of the original perceptual learning paradigm, which lead to observational learning.

We relate observational learning to other types of learning, mention some recent developments that enabled its emergence, and summarize the main empirical and modeling findings that observational learning studies obtained. We conclude by suggesting that observational learning studies have the potential of providing a unified framework to merge human statistical learning, chunk learning and rule learning.

The visual system must learn to infer the presence of objects and features in the world from the images it encounters, and as such it must, either implicitly or explicitly, model the way these elements interact to create the image. Do the response properties of cells in the mammalian visual system reflect this constraint? To address this question, we constructed a probabilistic model in which the identity and attributes of simple visual elements were represented explicitly and learnt the parameters of this model from unparsed, natural video sequences.

After learning, the behaviour and grouping of variables in the probabilistic model corresponded closely to functional and anatomical properties of simple and complex cells in the primary visual cortex (V1). In particular, feature identity variables were activated in a way that resembled the activity of complex cells, while feature attribute variables responded much like simple cells. Furthermore, the grouping of the attributes within the model closely parallelled the reported anatomical grouping of simple cells in cat V1. Thus, this generative model makes explicit an interpretation of complex and simple cells as elements in the segmentation of a visual scene into basic independent features, along with a parametrisation of their moment-by-moment appearances. We speculate that such a segmentation may form the initial stage of a hierarchical system that progressively separates the identity and appearance of more articulated visual elements, culminating in view-invariant object recognition.

Modular toolkit for Data Processing (MDP) is a data processing framework written in Python. From the user’s perspective, MDP is a collection of supervised and unsupervised learning algorithms and other data processing units that can be combined into data processing sequences and more complex feed-forward network architectures. Computations are performed efficiently in terms of speed and memory requirements. From the scientific developer’s perspective, MDP is a modular framework, which can easily be expanded.

The implementation of new algorithms is easy and intuitive. The new implemented units are then automatically integrated with the rest of the library. MDP has been written in the context of theoretical research in neuroscience, but it has been designed to be helpful in any context where trainable data processing algorithms are used. Its simplicity on the user’s side, the variety of readily available algorithms, and the reusability of the implemented units make it also a useful educational tool.
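The idea of combining trainable units into a processing sequence can be illustrated with a minimal plain-Python sketch. This is not MDP's actual API: the classes `Node`, `CenterNode`, `ScaleNode`, and `Flow` below are hypothetical stand-ins written only to show the pattern of training each unit on the output of the previous one.

```python
class Node:
    """Hypothetical processing unit: optionally trainable, always callable."""

    def train(self, data):
        # Default: nothing to learn.
        pass

    def __call__(self, data):
        raise NotImplementedError


class CenterNode(Node):
    """Learns the mean of the training data and subtracts it."""

    def __init__(self):
        self.mean = 0.0

    def train(self, data):
        self.mean = sum(data) / len(data)

    def __call__(self, data):
        return [x - self.mean for x in data]


class ScaleNode(Node):
    """Learns the largest absolute value and divides by it."""

    def __init__(self):
        self.scale = 1.0

    def train(self, data):
        peak = max(abs(x) for x in data)
        self.scale = peak if peak > 0 else 1.0

    def __call__(self, data):
        return [x / self.scale for x in data]


class Flow:
    """Hypothetical sequence of nodes, trained and executed in order."""

    def __init__(self, nodes):
        self.nodes = list(nodes)

    def train(self, data):
        # Each node is trained on the output of the nodes before it.
        for node in self.nodes:
            node.train(data)
            data = node(data)

    def __call__(self, data):
        for node in self.nodes:
            data = node(data)
        return data


samples = [1.0, 2.0, 3.0, 6.0]  # made-up illustrative data
flow = Flow([CenterNode(), ScaleNode()])
flow.train(samples)
result = flow(samples)
```

A new unit only needs to implement `train` and `__call__` to slot into an existing sequence, which is the kind of extensibility the abstract describes.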

2008

Efficient and versatile processing of any hierarchically structured information requires a learning mechanism that combines lower-level features into higher-level chunks. We investigated this chunking mechanism in humans with a visual pattern-learning paradigm. We developed an ideal learner based on Bayesian model comparison that extracts and stores only those chunks of information that are minimally sufficient to encode a set of visual scenes.

Our ideal Bayesian chunk learner not only reproduced the results of a large set of previous empirical findings in the domain of human pattern learning but also made a key prediction that we confirmed experimentally. In accordance with Bayesian learning but contrary to associative learning, human performance was well above chance when pair-wise statistics in the exemplars contained no relevant information. Thus, humans extract chunks from complex visual patterns by generating accurate yet economical representations and not by encoding the full correlational structure of the input.

2007

Visual statistical learning of shape sequences was examined in the context of occluded object trajectories. In a learning phase, participants viewed a sequence of moving shapes whose trajectories and speed profiles elicited either a bouncing or a streaming percept: The sequences consisted of a shape moving toward and then passing behind an occluder, after which two different shapes emerged from behind the occluder. At issue was whether statistical learning linked both object transitions equally, or whether the percept of either bouncing or streaming constrained the association between pre- and postocclusion objects.

In familiarity judgments following the learning, participants reliably selected the shape pair that conformed to the bouncing or streaming bias that was present during the learning phase. A follow-up experiment demonstrated that differential eye movements could not account for this finding. These results suggest that sequential statistical learning is constrained by the spatiotemporal perceptual biases that bind two shapes moving through occlusion, and that this constraint thus reduces the computational complexity of visual statistical learning.

2005

Studies of cognitive development in human infants have relied almost entirely on descriptive data at the behavioral level – the age at which a particular ability emerges. The underlying mechanisms of cognitive development remain largely unknown, despite attempts to correlate behavioral states with brain states.

We argue that research on cognitive development must focus on theories of learning, and that these theories must reveal both the computational principles and the set of constraints that underlie developmental change. We discuss four specific issues in infant learning that gain renewed importance in light of this opinion.

The authors investigated how human adults encode and remember parts of multielement scenes composed of recursively embedded visual shape combinations. The authors found that shape combinations that are parts of larger configurations are less well remembered than shape combinations of the same kind that are not embedded.

Combined with basic mechanisms of statistical learning, this embeddedness constraint enables the development of complex new features for acquiring internal representations efficiently without being computationally intractable. The resulting representations also encode parts and wholes by chunking the visual input into components according to the statistical coherence of their constituents. These results suggest that a bootstrapping approach of constrained statistical learning offers a unified framework for investigating the formation of different internal representations in pattern and scene perception.

2004

During vision, it is believed that neural activity in the primary visual cortex is predominantly driven by sensory input from the environment. However, visual cortical neurons respond to repeated presentations of the same stimulus with a high degree of variability. Although this variability has been considered to be noise owing to random spontaneous activity within the cortex, recent studies show that spontaneous activity has a highly coherent spatio-temporal structure. This raises the possibility that the pattern of this spontaneous activity may shape neural responses during natural viewing conditions to a larger extent than previously thought.

Here, we examine the relationship between spontaneous activity and the response of primary visual cortical neurons to dynamic natural-scene and random-noise film images in awake, freely viewing ferrets from the time of eye opening to maturity. The correspondence between evoked neural activity and the structure of the input signal was weak in young animals, but systematically improved with age. This improvement was linked to a shift in the dynamics of spontaneous activity. At all ages including the mature animal, correlations in spontaneous neural firing were only slightly modified by visual stimulation, irrespective of the sensory input. These results suggest that in both the developing and mature visual cortex, sensory evoked neural activity represents the modulation and triggering of ongoing circuit dynamics by input signals, rather than directly reflecting the structure of the input signal itself.

2003

Visual experience, which is defined by brief saccadic sampling of complex scenes at high contrast, has typically been studied with static gratings at threshold contrast. To investigate how suprathreshold visual processing is related to threshold vision, we tested the temporal integration of contrast in the presence of large, sudden changes in the stimuli such as occur during saccades under natural conditions.

We observed completely different effects under threshold and suprathreshold viewing conditions. The threshold contrast of successively presented gratings that were either perpendicularly oriented or of inverted phase showed probability summation, implying no detectable interaction between independent visual detectors. However, at suprathreshold levels we found complete algebraic summation of contrast for stimuli longer than 53 ms. The same results were obtained during sudden changes between random noise patterns and between natural scenes. These results cannot be explained by traditional contrast gain-control mechanisms or the effect of contrast constancy. Rather, at suprathreshold levels, the visual system seems to conserve the contrast information from recently viewed images, perhaps for the efficient assessment of the contrast of the visual scene while the eye saccades from place to place.
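The contrast between the two summation regimes can be made concrete with a small sketch. It assumes the standard textbook form of probability summation for two independent detectors, and treats algebraic summation as plain addition of contrast values; the function names and the numbers are illustrative and are not taken from the study.

```python
def probability_summation(p_first, p_second):
    """Detection probability when either of two independent detectors suffices."""
    return 1.0 - (1.0 - p_first) * (1.0 - p_second)


def algebraic_summation(contrast_first, contrast_second):
    """Linear addition of two contrast values (illustrative units)."""
    return contrast_first + contrast_second


# Two stimuli, each detected half of the time on its own (made-up values).
combined_detection = probability_summation(0.5, 0.5)

# Two successive stimuli with made-up contrast values.
combined_contrast = algebraic_summation(0.2, 0.3)
```

Under probability summation the two stimuli never interact; the gain comes only from having two independent chances to detect something. Under algebraic summation the contrast values themselves add.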

Natural scene coding in ferret visual cortex was investigated using a new technique for multi-site recording of neuronal activity from the cortical surface. Surface recordings accurately reflected radially aligned layer 2/3 activity. At individual sites, evoked activity to natural scenes was weakly correlated with the local image contrast structure falling within the cells’ classical receptive field. However, a population code, derived from activity integrated across cortical sites having retinotopically overlapping receptive fields, correlated strongly with the local image contrast structure.

Cell responses demonstrated high lifetime sparseness, population sparseness, and high dispersal values, implying efficient neural coding in terms of information processing. These results indicate that while cells at an individual cortical site do not provide a reliable estimate of the local contrast structure in natural scenes, cell activity integrated across distributed cortical sites is closely related to this structure in the form of a sparse and dispersed code.

2002

The ability of humans to recognize a nearly unlimited number of unique visual objects must be based on a robust and efficient learning mechanism that extracts complex visual features from the environment. To determine whether statistically optimal representations of scenes are formed during early development, we used a habituation paradigm with 9-month-old infants and found that, by mere observation of multielement scenes, they become sensitive to the underlying statistical structure of those scenes. After exposure to a large number of scenes, infants paid more attention not only to element pairs that cooccurred more often as embedded elements in the scenes than other pairs, but also to pairs that had higher predictability (conditional probability) between the elements of the pair. These findings suggest that, similar to lower-level visual representations, infants learn higher-order visual features based on the statistical coherence of elements within the scenes, thereby allowing them to develop an efficient representation for further associative learning.

In 3 experiments, the authors investigated the ability of observers to extract the probabilities of successive shape co-occurrences during passive viewing. Participants became sensitive to several temporal-order statistics, both rapidly and with no overt task or explicit instructions. Sequences of shapes presented during familiarization were distinguished from novel sequences of familiar shapes, as well as from shape sequences that were seen during familiarization but less frequently than other shape sequences, demonstrating at least the extraction of joint probabilities of 2 consecutive shapes.

When joint probabilities did not differ, another higher-order statistic (conditional probability) was automatically computed, thereby allowing participants to predict the temporal order of shapes. Results of a single-shape test documented that lower-order statistics were retained during the extraction of higher-order statistics. These results suggest that observers automatically extract multiple statistics of temporal events that are suitable for efficient associative learning of new temporal features.
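The distinction between joint and conditional probability of consecutive shapes can be illustrated with a short sketch. The letter sequence below is made up for illustration and does not reproduce the experimental stimuli; `pair_statistics` is a hypothetical helper name.

```python
from collections import Counter


def pair_statistics(sequence):
    """Return joint and conditional probabilities of consecutive item pairs.

    joint[(a, b)]       = P(a followed by b) over all consecutive pairs
    conditional[(a, b)] = P(next item is b | current item is a)
    """
    pairs = list(zip(sequence, sequence[1:]))
    pair_counts = Counter(pairs)
    first_counts = Counter(first for first, _ in pairs)
    n_pairs = len(pairs)

    joint = {pair: count / n_pairs for pair, count in pair_counts.items()}
    conditional = {
        (a, b): count / first_counts[a] for (a, b), count in pair_counts.items()
    }
    return joint, conditional


# Each letter stands for one shape in a made-up familiarization stream.
sequence = list("ABABACAB")
joint, conditional = pair_statistics(sequence)
```

In this toy stream the pair (C, A) is rare, so its joint probability is low (1/7), yet A always follows C, so its conditional probability is 1. The pair (A, B) is frequent (joint 3/7) but less predictable (conditional 3/4). The two statistics can therefore dissociate, which is why they can be tested separately.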

2001

Three experiments investigated the ability of human observers to extract the joint and conditional probabilities of shape co-occurrences during passive viewing of complex visual scenes. Results indicated that statistical learning of shape conjunctions was both rapid and automatic, as subjects were not instructed to attend to any particular features of the displays. Moreover, in addition to single-shape frequency, subjects acquired in parallel several different higher-order aspects of the statistical structure of the displays, including absolute shape-position relations in an array, shape-pair arrangements independent of position, and conditional probabilities of shape co-occurrences. Unsupervised learning of these higher-order statistics provides support for Barlow’s theory of visual recognition, which posits that detecting “suspicious coincidences” of elements during recognition is a necessary prerequisite for efficient learning of new visual features.

How do we attend to objects at a variety of sizes as we view our visual world? Because of an advantage in identification of lowpass over highpass filtered patterns, as well as large over small images, a number of theorists have assumed that size-independent recognition is achieved by spatial frequency (SF) based coarse-to-fine tuning. We found that the advantage of large sizes or low SFs was lost when participants attempted to identify a target object (specified verbally) somewhere in the middle of a sequence of 40 images of objects, each shown for only 72 ms, as long as the target and distractors were the same size or spatial frequency (unfiltered or low or high bandpassed).

When targets were of a different size or scale than the distractors, a marked advantage (pop out) was observed for large (unfiltered) and low SF targets against small (unfiltered) and high SF distractors, respectively, and a marked decrement for the complementary conditions. Importantly, this pattern of results for large and small images was unaffected by holding absolute or relative SF content constant over the different sizes and it could not be explained by simple luminance- or contrast-based pattern masking. These results suggest that size/scale tuning in object recognition was accomplished over the first several images (576 ms) in the sequence and that the size tuning was implemented by a mechanism sensitive to spatial extent rather than to variations in spatial frequency.

The representation of shape mediating visual object priming was investigated. In two blocks of trials, subjects named images of common objects presented for 185 ms that were bandpass filtered, either at high (10 cpd) or at low (2 cpd) center frequency with a 1.5 octave bandwidth, and positioned either 5° right or left of fixation. The second presentation of an image of a given object type could be filtered at the same or different band, be shown at the same or translated (and mirror reflected) position, and be the same exemplar as that in the first block or a same-name different-shaped exemplar (e.g. a different kind of chair).

Second block reaction times (RTs) and error rates were markedly lower than they were on the first block, which, in the context of prior results, was indicative of strong priming. A change of exemplar in the second block resulted in a significant cost in RTs and error rates, indicating that a portion of the priming was visual and not just verbal or basic-level conceptual. However, a change in the spatial frequency (SF) content of the image had no effect on priming despite the dramatic difference it made in appearance of the objects. This invariance to SF changes was also preserved with centrally presented images in a second experiment. Priming was also invariant to a change in left–right position (and mirror orientation) of the image. The invariance over translation of such a large magnitude suggests that the locus of the representation mediating the priming is beyond an area that would be homologous to posterior TEO in the monkey. We conclude that this representation is insensitive to low level image variations (e.g. SF, precise position or orientation of features) that do not alter the basic part-structure of the object. Finally, recognition performance was unaffected by whether low or high bandpassed images were presented either in the left or right visual field, giving no support to the hypothesis of hemispheric differences in processing low and high spatial frequencies.

We study the hypothesis that observers can use haptic percepts as a standard against which the relative reliabilities of visual cues can be judged, and that these reliabilities determine how observers combine depth information provided by these cues. Using a novel visuo-haptic virtual reality environment, subjects viewed and grasped virtual objects. In Experiment 1, subjects were trained under motion relevant conditions, during which haptic and visual motion cues were consistent whereas haptic and visual texture cues were uncorrelated, and texture relevant conditions, during which haptic and texture cues were consistent whereas haptic and motion cues were uncorrelated. Subjects relied more on the motion cue after motion relevant training than after texture relevant training, and more on the texture cue after texture relevant training than after motion relevant training. Experiment 2 studied whether or not subjects could adapt their visual cue combination strategies in a context-dependent manner based on context-dependent consistencies between haptic and visual cues.

Subjects successfully learned two cue combination strategies in parallel, and correctly applied each strategy in its appropriate context. Experiment 3, which was similar to Experiment 1 except that it used a more naturalistic experimental task, yielded the same pattern of results as Experiment 1 indicating that the findings do not depend on the precise nature of the experimental task. Overall, the results suggest that observers can involuntarily compare visual and haptic percepts in order to evaluate the relative reliabilities of visual cues, and that these reliabilities determine how cues are combined during three-dimensional visual perception.
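The abstract does not state the combination rule, but a common way to formalize "reliabilities determine how cues are combined" is a linear, inverse-variance weighted average. The sketch below assumes that model; the function name `combine_cues` and the numeric values are hypothetical and chosen only for illustration, not taken from the study.

```python
def combine_cues(estimates, variances):
    """Combine depth estimates with weights proportional to reliability.

    Assumption: reliability is the inverse of each cue's variance, and the
    combined estimate is the reliability-weighted average of the cues.
    """
    reliabilities = [1.0 / v for v in variances]
    total = sum(reliabilities)
    weights = [r / total for r in reliabilities]
    combined = sum(w * e for w, e in zip(weights, estimates))
    return combined, weights


# Hypothetical example: the motion cue says 10 units of depth, texture says 14.
# A lower variance for motion stands in for the state after motion relevant training.
combined, weights = combine_cues(estimates=[10.0, 14.0], variances=[1.0, 4.0])
print(weights)   # motion receives the larger weight
print(combined)  # the estimate is pulled toward the motion cue
```

In this reading, training with haptic feedback would change the variance the observer assigns to each visual cue, which in turn shifts the weights.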

2000

We have studied some of the design trade-offs governing visual representations based on spatially invariant conjunctive feature detectors, with an emphasis on the susceptibility of such systems to false-positive recognition errors — Malsburg’s classical binding problem. We begin by deriving an analytical model that makes explicit how recognition performance is affected by the number of objects that must be distinguished, the number of features included in the representation, the complexity of individual objects, and the clutter load, that is, the amount of visual material in the field of view in which multiple objects must be simultaneously recognized, independent of pose, and without explicit segmentation.

Using the domain of text to model object recognition in cluttered scenes, we show that with corrections for the nonuniform probability and nonindependence of text features, the analytical model achieves good fits to measured recognition rates in simulations involving a wide range of clutter loads, word sizes, and feature counts. We then introduce a greedy algorithm for feature learning, derived from the analytical model, which grows a representation by choosing those conjunctive features that are most likely to distinguish objects from the cluttered backgrounds in which they are embedded. We show that the representations produced by this algorithm are compact, decorrelated, and heavily weighted toward features of low conjunctive order. Our results provide a more quantitative basis for understanding when spatially invariant conjunctive features can support unambiguous perception in multiobject scenes, and lead to several insights regarding the properties of visual representations optimized for specific recognition tasks.

1999

The classification of a table as round rather than square, a car as a Mazda rather than a Ford, a drill bit as 3/8-inch rather than 1/4-inch, and a face as Tom have all been regarded as a single process termed “subordinate classification”. Despite the common label, the considerable heterogeneity of the perceptual processing required to achieve such classifications requires, minimally, a more detailed taxonomy. Perceptual information relevant to subordinate-level shape classifications can be presumed to vary on continua of (a) the type of distinctive information that is present, nonaccidental or metric, (b) the size of the relevant contours or surfaces, and (c) the similarity of the to-be-discriminated features, such as whether a straight contour has to be distinguished from a contour of low curvature versus high curvature.

We consider three relatively pure cases. Case 1 subordinates may be distinguished by a representation, a geon structural description (GSD), specifying a nonaccidental characterization of an object’s large parts and the relations among these parts, such as a round table versus a square table. Case 2 subordinates are also distinguished by GSDs, except that the distinctive GSDs are present at a small scale in a complex object so the location and mapping of the GSDs are contingent on an initial basic-level classification, such as when we use a logo to distinguish various makes of cars. Expertise for Cases 1 and 2 can be easily achieved through specification, often verbal, of the GSDs. Case 3 subordinates, which have furnished much of the grist for theorizing with “view-based” template models, require fine metric discriminations. Cases 1 and 2 account for the overwhelming majority of shape-based basic- and subordinate-level object classifications that people can and do make in their everyday lives. These classifications are typically made quickly, accurately, and with only modest costs of viewpoint changes. Whereas the activation of an array of multiscale, multiorientation filters, presumed to be at the initial stage of all shape processing, may suffice for determining the similarity of the representations mediating recognition among Case 3 subordinate stimuli (and faces), Cases 1 and 2 require that the output of these filters be mapped to classifiers that make explicit the nonaccidental properties, parts, and relations specified by the GSDs.

1998

Humans use distance information to scale the size of objects. Earlier studies demonstrated changes in neural response as a function of gaze direction and gaze distance in the dorsal visual cortical pathway to parietal cortex.

These findings have been interpreted as evidence of the parietal pathway’s role in spatial representation. Here, distance-dependent changes in neural response were also found to be common in neurons in the ventral pathway leading to inferotemporal cortex of monkeys. This result implies that the information necessary for object and spatial scaling is common to all visual cortical areas.

1996

A number of recent successful models of face recognition posit only two layers, an input layer consisting of a lattice of spatial filters and a single subsequent stage by which those descriptor values are mapped directly onto an object representation layer by standard matching methods such as stochastic optimization. Is this approach sufficient for modeling human object recognition?

We tested whether a highly efficient version of such a two-layer model would manifest effects similar to those shown by humans when given the task of recognizing images of objects that had been employed in a series of psychophysical experiments. System accuracy was quite high overall, but was qualitatively different from that evidenced by humans in object recognition tasks. The discrepancy between the system’s performance and human performance is likely to be revealed by all models that map filter values directly onto object units. These results suggest that human object recognition (as opposed to face recognition) may be difficult to approximate by models that do not posit hidden units for explicit representation of intermediate entities such as edges, viewpoint invariant classifiers, axes, shocks and/or object parts.

1995

The strength of visual priming of briefly presented gray-scale pictures of real-world objects, measured by naming reaction times and errors, was independent of whether the primed picture of the object was presented at the same size as the original picture or at a different size.

These findings replicate Biederman & Cooper’s (1992) results on size invariance in shape recognition, which were obtained with line drawings, and extend them to the domain of gray level images. Entry-level shape identification is based either predominantly on scale-invariant representations incorporating orientation and depth discontinuities which are well captured by line drawings, or both discontinuities and the representation derived from smooth gradual surface changes are scale invariant.